Our Machine Learning in Linux series focuses on apps that make it easy to experiment with machine learning.

Large Language Models trained on massive amounts of text can perform new tasks from textual instructions. They can generate creative text, solve maths problems, answer reading comprehension questions, and much more.

Text generation web UI is software that offers a web user interface for a variety of large language models such as LLaMA, llama.cpp, GPT-J, OPT, and GALACTICA. It has a lofty objective: to be the AUTOMATIC1111/stable-diffusion-webui of text generation. If you’re unfamiliar with Stable Diffusion web UI, read our review.

Installation

Manually installing Text generation web UI would be very time-consuming. Fortunately, the project provides a wonderful installer script to automate the whole installation process. Download it using wget (or a similar tool).

$ wget https://github.com/oobabooga/text-generation-webui/releases/download/installers/oobabooga_linux.zip

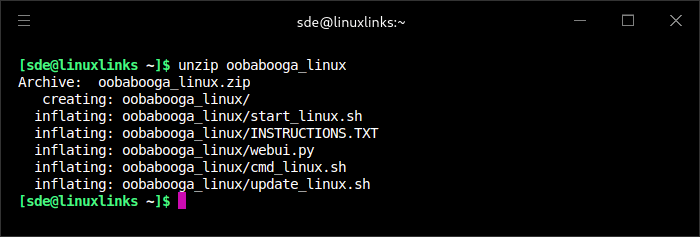

Uncompress the zip file. For example, let’s use unzip:
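
A minimal sketch of this step, assuming the archive was saved to the current directory under its original name (oobabooga_linux.zip) and that the unzip utility is installed:

```shell
# Assumption: the archive from the wget step is in the current directory.
archive="oobabooga_linux.zip"

if [ -f "$archive" ]; then
    # Extract the archive; the next step changes into the oobabooga_linux directory.
    unzip "$archive"
else
    echo "Archive not found: $archive" >&2
fi
```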

Change into the newly created directory, make the script executable and run it:

$ cd oobabooga_linux && chmod u+x start_linux.sh && ./start_linux.sh

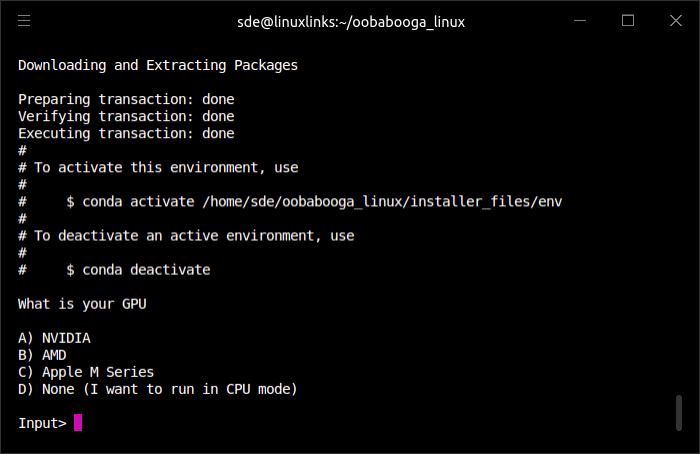

You’re only asked one question throughout the whole installation process:

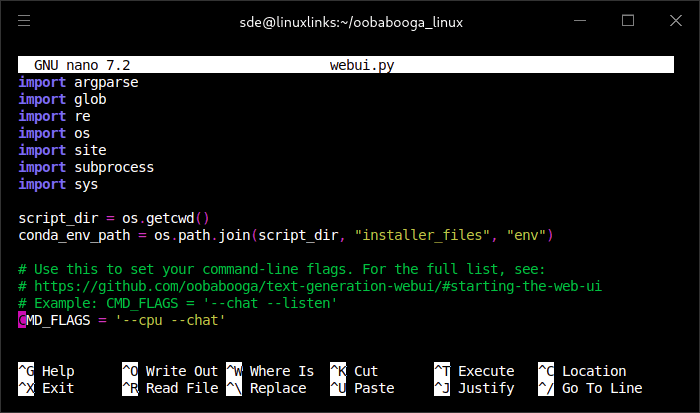

We chose option A as our testing machine hosts an NVIDIA GeForce RTX 3060 Ti graphics card. If your machine doesn’t have a dedicated graphics card, you’ll need to use the CPU mode, so choose option D. If you go with D, once the installation ends, you need to edit webui.py with a text editor and add the --cpu flag to CMD_FLAGS as shown in the image below.
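
If you’d rather not make that edit by hand, it can be scripted. The following is only a sketch: add_cpu_flag is a hypothetical helper, and it assumes webui.py defines the flags on a single line of the form CMD_FLAGS = '...' with single quotes. Check the file first, as the layout may differ in your copy.

```shell
# Hypothetical helper: append --cpu to the CMD_FLAGS line of the given file.
# Assumption: the line looks like  CMD_FLAGS = '...'  (single quotes, one line).
add_cpu_flag() {
    file="$1"
    # Do nothing if the flag is already present.
    if grep -q "^CMD_FLAGS = '.*--cpu" "$file"; then
        return 0
    fi
    # Keep a backup, then rewrite the file from the backup with the flag appended.
    cp "$file" "$file.bak"
    sed "s/^CMD_FLAGS = '\(.*\)'/CMD_FLAGS = '\1 --cpu'/" "$file.bak" > "$file"
}

# Run from the directory that contains webui.py; skipped if the file is absent.
if [ -f webui.py ]; then
    add_cpu_flag webui.py
    # Show the resulting line so the change can be checked by eye.
    grep -n "^CMD_FLAGS" webui.py
fi
```

The helper leaves the original file as webui.py.bak, so the change is easy to undo if the flags line turns out to have a different format.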

The installation script proceeds to install a whole raft of packages.

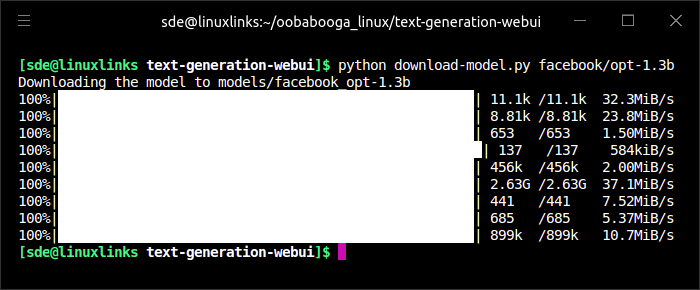

When the installation is complete, you’re told that you’ll need to download a model. Models can be downloaded within the web UI from the Model tab, or you can use the download-model.py script (stored in the text-generation-webui folder). For example, to download the opt-1.3b model:
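
A minimal sketch of that download, assuming the script takes a Hugging Face identifier in organization/model form (facebook/opt-1.3b here) and that a Python interpreter with the project’s dependencies is on your PATH:

```shell
# Assumption: run from the text-generation-webui folder, where download-model.py is stored.
model="facebook/opt-1.3b"

if [ -f download-model.py ]; then
    # Fetch the model files for the chosen identifier.
    python download-model.py "$model"
else
    echo "download-model.py not found in $(pwd)" >&2
fi
```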

Some models are massive downloads, running to many gigabytes.


This software lives up to its hype!